Caller IDs for Whales

Crowd-sourcing helps sort marine mammal vocalizations

Imagine extraterrestrials come to Earth, seeking to understand human life. They dangle recording devices beneath the clouds or occasionally tag people with retrievable recorders. They collect thousands of bits of conversation from individuals and from congregations of people: at cocktail parties, Thanksgiving gatherings, subway platforms, baseball stadiums, and bedrooms.

They have mounds of data, but in a language they don’t understand. Yet within those mounds are patterns—repeated phrases, sounds, inflections, rhythms. Unraveling those patterns is a key to revealing what humans are saying and doing.

Now imagine the poor extraterrestrial whose job it is to sort through all those snippets of sound and identify the patterns.

Marine mammal scientists face a similar situation. They have collected troves of recordings of calls from pilot whales, killer whales, dolphins, and other marine mammals. Many of these calls have similar acoustic features that biologists can categorize into call types.

But examining and comparing thousands of recorded calls and making careful judgments on how to sort them consumes huge chunks of time. I got a taste of this during a fellowship in 2012 at Woods Hole Oceanographic Institution (WHOI), where I spent hundreds of hours over several weeks making 3,127 comparisons of my own. [See and hear dolphin calls.]

Confronted with the task of categorizing more than 4,000 pilot whale calls, WHOI research specialist Laela Sayigh and colleagues from the University of St. Andrews seized the idea of plugging into the power of crowd-sourcing. Team member Alexander Von Benda-Beckmann remembered Zooniverse, a citizen-science hub that asked the public to help classify galaxy images from NASA’s Hubble Space Telescope according to their shapes. He realized that the same could be done for marine mammal calls, which led to the creation of Whale FM, a website that asks people “to help us understand what whales are saying.”

“We didn’t have to round up volunteers,” Sayigh said. They came to the scientists.

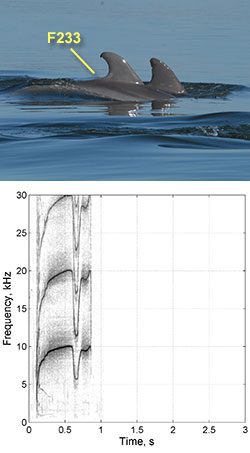

Since the fall of 2011, more than 10,000 people have visited Whale FM to listen to recorded whale calls and look at their spectrograms, or visual representations of the sounds. They compare a given call with a list of other spectrograms, clicking a “✓” next to calls that are similar or an “X” button to discard calls that don’t match. Visitors from around the world, averaging 30 call matches per visit, have categorized nearly 200,000 calls. They do it not for cash, prizes, or even recognition.

“People seem to feel rewarded,” Sayigh said, whether by helping science, by furthering understanding of marine mammals, or perhaps just by playing a game of “spot-the-difference.”

“I think there’s an internal joy in listening to animal calls,” WHOI scientist emeritus Peter Tyack said.

Scientists have been trying to automate the classification process, and early work suggests that mathematical algorithms may eventually be able to do the job. University of Tennessee researcher Arik Kershenbaum, for example, is experimenting with adapting code designed to recognize human music to classify dolphin whistles.

Still, even the most advanced computers tend to overlook, or cannot discern, subtle features of the vocalizations, Sayigh said. Those features are clearly important because they appear over and over again. Dolphins, for example, retain tiny blips in their whistles from year to year, and other dolphins that copy those whistles retain the blips as well. Sometimes computers conclude that two whistles came from different dolphins when they actually came from the same dolphin, which simply varied certain features of its whistle, such as its duration. And sometimes a dolphin loops its whistle several times, producing a single long, multi-looped whistle. A computer program comparing a three-loop whistle with a four-loop whistle will rate them as very different, Sayigh said, even if both came from the same dolphin and their spectrograms, to a human observer, have obviously identical contours.

Unlike computers, people can recognize such similarities, and both Tyack and Sayigh are confident that, at this stage, the human brain trumps mathematical algorithms at sorting out marine mammal vocalizations.

Not everyone agrees. Some of the strongest criticisms of the human visual inspection technique came from a 2001 paper by scientists Brenda McCowan and Diana Reiss, published in the journal Animal Behaviour. They argued that human visual inspection of spectrograms was subjective and could not stand up to “more objective quantitative methods.”

Six years later, Sayigh and colleagues published a rebuttal in the same journal. It validated human visual inspection, showing that even naive judges were very adept at grouping together whistles with similar contours. Indeed, the judges almost always grouped together whistles from the same individual without knowing that the whistles came from the same animal.

“Since that paper has been published I have really not experienced criticisms of using visual categorization,” Sayigh said.

Another study, published in 2012 by Sayigh, Tyack, and colleagues in the journal Marine Mammal Science, compared the scientists’ own call categorizations with those made by Whale FM citizen scientists. The two groups, one novice and the other highly experienced, agreed at least 74 percent of the time.

“Humans are unbelievably good at perceiving complex patterns,” Tyack said.

Whale FM is sponsored by Scientific American magazine and the University of St. Andrews. Sayigh’s and Tyack’s research was funded by the Office of Naval Research. Thean’s research as a WHOI Summer Student Fellow was funded by WHOI, the Becky Colvin Memorial Award, and the Princeton Environmental Institute.

See and hear dolphin calls

Images of dolphins, with whistles recorded by temporary tags on the dolphins and spectrograms, or visual representations, of each dolphin’s whistle. (Photos courtesy of Chicago Zoological Society/Sarasota Dolphin Research Program, taken under National Marine Fisheries Service Scientific Research Permit No. 15543.)


To learn about the behavior of pilot whales and other marine mammals, scientists have recorded the animals' underwater calls and vocalizations. But sorting through thousands of recordings to identify what animals made them is a time-consuming task. [See and hear dolphin calls.] (Courtesy of CIRCE (Conservation, Information and Research on Cetaceans) under National Marine Fisheries Service Permit #14241)

- With more than 4,000 recordings of pilot whales like these, researchers seized the idea of plugging into the power of crowd-sourcing, leading to the creation of Whale FM (http://whale.fm/), a website that asks people "to help us understand what whales are saying." (Photo by Frants Jensen, Woods Hole Oceanographic Institution, under National Marine Fisheries Service Permit #14241.)

- WHOI research specialist Laela Sayigh and colleagues from the University of St. Andrews say that people using the Whale FM website are helping them categorize the large number of marine mammal vocalizations the scientists have recorded. (Tom Kleindinst, Woods Hole Oceanographic Institution)


See Also

- Marine Mammal Center at WHOI

- Seismic Studies Capture Whale Calls from Oceanus magazine