The Squid, the Whale, and the Grad Student

A young scientist deciphers meaning embedded in sonar signals

On the Serengeti Plains of Africa, lions stalk their prey mainly by sight. Scientists studying them also use their eyes to observe the hunt and indeed the entire ecosystem. They can take in the whole panorama, easily distinguishing lions from hyenas, zebras from gazelles, acacia trees from termite hills and savannah grass.

Pity the marine scientist who would like to do the same in the dark depths of the ocean.

Most marine predators cruising the deep oceans rely on senses other than sight to find their prey. Many cetaceans, specifically toothed whales and dolphins, send out pings of sound that reflect back from objects in the depths, like submarines tracking enemies with sonar. Occurring hundreds of meters below the surface, the chase is invisible to us. Unable to watch the whales in action, scientists have only recently been able to listen in and record the sounds of the hunt.

But listening in isn’t enough; we also have to know what we’re hearing. It’s hard to figure out what’s going on down there if you can’t make head or tail (or tentacle or fish scale) of the signals coming back. What does the echo from a tasty, protein-packed squid sound like? How does it differ from that of a jellyfish or a piece of trash?

In other words, how do cetaceans recognize dinner when they hear it?

That’s one of the toughest problems in acoustic oceanography, says Peter Tyack, a marine biologist at Woods Hole Oceanographic Institution (WHOI). “The whales will swim past hundreds of targets before they decide to feed on one,” he said. “We have no idea why they choose the one they do.”

Wanted: biologist/engineer

Fisheries managers face a similar dilemma trying to monitor populations of commercially valuable species ranging from herring to squid. Conventional sonar “fish-finders” can detect creatures in the water below, but are not very good at identifying what they are.

What these situations really need is a marine biologist familiar with whale behavior who also knows her way around acoustic signal analysis. But how many scientists are skilled and trained in these diverse fields?

In Wu-Jung Lee, WHOI has found one. As an undergraduate, she majored in both zoology and electrical engineering before entering the MIT/WHOI Joint Program in 2007.

At WHOI, her advisors include Tyack, in the Biology Department, and acoustic scientists Tim Stanton and Andone Lavery in the Applied Ocean Physics and Engineering Department. Stanton and Lavery are exploring new methods of using sound to search for marine life in the oceans and to identify individual species from their sonar echoes. Whales essentially do the same to hunt for and identify their prey, a behavior that Tyack studies.

Tyack said that many of his students eventually combine the two fields, but they usually arrive with a background in life sciences and pick up acoustics by working with WHOI scientists and engineers who specialize in it. “I think Wu-Jung, with the engineering training she has already, can attempt very complicated approaches,” he said. Those approaches could help solve mysteries of how marine animals use sound and how humans can use sound to observe deep-sea animals.

Finding her niche

Growing up in Taiwan, Lee always knew she wanted to study biology. As a child she liked being out in the forest, getting to know creatures like the little snails that lived among the fallen leaves. Then in junior high, a teacher opened her eyes to the joys of physics.

At National Taiwan University, Lee pursued both. In particular, she studied signal processing, a subspecialty of electrical engineering and applied mathematics that deals with analysis of signals, whether they carry biological data, such as electrocardiograms, or communications data, such as radio signals.

Lee had no problem carrying a demanding double major. Figuring out what to do as a career was a different story. There were plenty of choices in biology and plenty more in engineering, but finding a career that involved both? That was hard.

Then she discovered the whales.

The summer after her freshman year, Lee got a job as a guide on a dolphin-watching boat. It was her first real experience with the ocean, and despite being prone to seasickness, she loved being out on the water. She especially liked learning more about cetaceans. She joined a society for marine mammal conservation and volunteered with a dolphin-stranding network. As she became familiar with research on dolphin communication, she realized that her signal processing background was great preparation for studying how cetaceans use sound. After graduating in 2005, Lee landed research internships with an acoustics lab in Singapore and a cetacean lab in Hawaii, and in 2007, she began her work at WHOI.

All echoes are not alike

The first phase of Lee’s research focused on the sonar echoes that reflect off squid, a favorite prey of many kinds of dolphins and toothed whales. She hoped that by figuring out what information whales get from the echoes, she could begin to understand how they know whether or not a given echo comes from a potential meal.

“We have to know what the whales are actually looking at, through the echo, before we can say what they are using to catch their prey,” she said.

Besides providing a better understanding of whale echolocation, Lee’s work will also help resource managers who hope to use sonar to identify squid in the wild. Stanton said there’s currently no good way to monitor squid populations. Conventional sonar “fish-finders” send out a narrow-band signal at a single frequency, such as 120 kilohertz (kHz), that provides little information when it echoes back from the target. They don’t tell you what they’ve found, only that something is swimming around down there.

The key to getting more information from sonar echoes is to start with sonar signals that contain more information in the first place, said Stanton. Just as light spans a spectrum of wavelengths representing colors, sound waves can be transmitted over a wide range of frequencies; the greater the range of frequencies you transmit, the greater the variety and detail of sound signals—and potential information—you get back.

Which frequencies you transmit also makes a difference. The strength of the echoes from objects in the ocean strongly depends on the frequency of the sound that was transmitted. High frequencies (above about 100 kHz) are valuable for detecting minute objects such as zooplankton. Lower frequencies are valuable for detecting larger items, such as fish and squid, and are commonly used by hunting cetaceans.
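One rough way to see why frequency matters is to convert it to wavelength, using the relation wavelength = sound speed / frequency. Objects much smaller than the wavelength scatter sound only weakly, so shorter wavelengths (higher frequencies) are better suited to tiny targets. The sketch below assumes a nominal sound speed of 1,500 meters per second in seawater; that value and the function name are illustrative assumptions, not figures from the researchers.

```python
# Assumed nominal speed of sound in seawater (m/s); the real value varies
# with temperature, salinity, and depth.
SOUND_SPEED_M_PER_S = 1500.0


def wavelength_cm(frequency_khz, sound_speed=SOUND_SPEED_M_PER_S):
    """Return the acoustic wavelength in centimeters for a frequency in kHz."""
    frequency_hz = frequency_khz * 1000.0
    return sound_speed / frequency_hz * 100.0


# Compare a few example frequencies, including the 120 kHz fish-finder case.
for f_khz in (10, 50, 120):
    print(f"{f_khz:>4} kHz -> {wavelength_cm(f_khz):.2f} cm")
```

Under that assumption, a 120 kHz ping has a wavelength of about 1.25 centimeters, while a 10 kHz ping stretches to about 15 centimeters.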

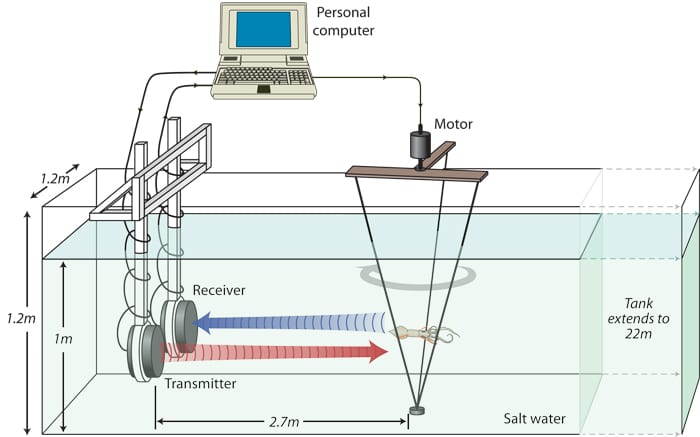

Over the past two decades, Stanton and Lavery have pioneered the use of broadband sonar comprising many frequencies to identify creatures ranging from zooplankton to herring. Lee applied their methods to squid. She aimed broadband sonar pings, in the same range of frequencies many whales use for hunting, at captive squid (Loligo pealeii). She captured their echoes with an underwater microphone, which converted the sound into electronic signals and recorded them on a computer for various types of analysis.

Echoes from every angle

One big problem with interpreting sonar data is that echoes from one object can be very different depending on how the object is oriented relative to the incoming sound waves. The echo from a squid swimming across a whale’s field of view will differ from the echo from a squid swimming away from or toward the whale.

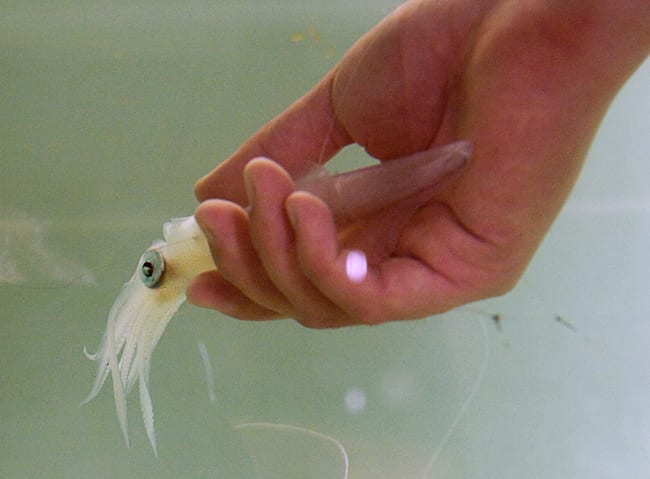

Lee solved that problem by devising an experimental apparatus to rotate a squid so the sound waves would strike it from various angles. That sounds simple enough, but it was actually one of the most challenging parts of the project. Squid aren’t easy to deal with in the lab; they’re shy, slippery, and sensitive to over-handling.

“It took me a while to figure this out,” Lee said. First she sedates the squid by soaking it in a solution of magnesium chloride. Once the animal is properly knocked out, she runs two monofilament lines through its mantle, the muscular sheath around the body. Then she attaches the monofilaments to a frame that can be rotated through a full circle, one degree at a time. By the time the squid awakens, it is securely tethered and ready to reflect her sonar pings from various angles.

So far, so good. But the raw data revealed little. The signals were just too complicated, each one a welter of random-looking squiggles. That’s where Lee’s signal processing experience came in. Using a set of mathematical “filters,” she was able to enhance the parts of the signal that were meaningful and reduce the meaningless “noise.” Not only did she develop a clear picture of what squid sonar echoes sound like, she was able to glean incredibly detailed information from them.
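The article does not say which filters Lee used. As an illustration only, the sketch below shows one standard technique for broadband pings, the matched filter (also called pulse compression), which slides a copy of the transmitted ping along the recording and looks for the best match. Every number here (sample rate, sweep frequencies, noise level, echo delay) is a made-up assumption, and the recording is simulated rather than real squid data.

```python
import math
import random


def chirp(n_samples, f_start, f_end, sample_rate):
    """Return a linear frequency sweep from f_start to f_end (Hz)."""
    duration = n_samples / sample_rate
    sweep_rate = (f_end - f_start) / duration  # Hz per second
    return [
        math.sin(2 * math.pi * (f_start * t + 0.5 * sweep_rate * t * t))
        for t in (i / sample_rate for i in range(n_samples))
    ]


def matched_filter(received, template):
    """Cross-correlate the recording with the transmitted ping at every lag."""
    n, m = len(received), len(template)
    return [
        sum(received[lag + k] * template[k] for k in range(m))
        for lag in range(n - m + 1)
    ]


random.seed(0)
sample_rate = 500_000                      # hypothetical sampling rate (Hz)
ping = chirp(500, 30_000, 70_000, sample_rate)

# Simulated recording: random noise with one echo hidden at a known delay.
true_delay = 700                           # in samples, chosen for the demo
recording = [random.gauss(0.0, 1.0) for _ in range(2000)]
for k, value in enumerate(ping):
    recording[true_delay + k] += value     # echo no louder than the noise

# The filter output peaks where the recording best matches the ping.
output = matched_filter(recording, ping)
estimated_delay = max(range(len(output)), key=lambda i: output[i])
print("true delay:", true_delay, "estimated delay:", estimated_delay)
```

In the raw simulated recording the echo is about as loud as the noise around it, yet the filter output has a sharp peak near the true delay. The wider the range of frequencies in the ping, the sharper that peak becomes, which is one reason broadband signals can carry finer detail.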

“These echoes that come back actually help us to identify exactly where the echo comes from in the squid body,” she said.

Subtleties of sound

In most cases, the echoes depended on where on the squid the pings hit, which in turn depended on the squid’s orientation. Pings that hit the squid broadside along its muscular, tubular body sent back very consistent echoes, but pings that reflected from other angles sent back echoes that varied a lot from one ping to the next. Lee’s analysis showed that the echoes varied depending on the positioning and shape of the squid’s flexible, ever-changing tentacles, which extend forward from the head.
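One simple way to put a number on "consistent" versus "varied" is the coefficient of variation: the spread of echo strengths across repeated pings divided by their average. The sketch below is a hypothetical illustration of that idea, not Lee's analysis, and the amplitude values are invented for the example rather than measured.

```python
from statistics import mean, stdev


def ping_to_ping_variability(amplitudes):
    """Coefficient of variation (std / mean) of echo amplitudes across pings."""
    return stdev(amplitudes) / mean(amplitudes)


# Invented example amplitudes in arbitrary units, NOT measured data.
echoes_by_orientation = {
    "broadside": [1.00, 1.02, 0.98, 1.01, 0.99],
    "head-on": [0.20, 0.45, 0.10, 0.60, 0.30],
}

# A small value means the echoes barely change from one ping to the next.
for label, amplitudes in echoes_by_orientation.items():
    print(f"{label}: variability = {ping_to_ping_variability(amplitudes):.2f}")
```

With these invented values, the broadside echoes score far lower than the head-on echoes, mirroring the pattern described above.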

Lee’s tethering system also enabled her to improve on previous studies of squid acoustics by testing live animals. Most earlier work used dead squid, for ease of handling. Lee discovered, though, that sonar signals reflected from dead and live squid were dramatically different.

“Something did change significantly right after the animal died,” Lee said. She theorizes that proteins in the squid’s muscle change quickly after death, altering the echoes.

Her research confirmed that a computer model developed by former Joint Program/Navy Master’s student Ben Jones (M.Sc., 2006) did a good job of simulating how sound would reflect off squid. Her tests with actual squid determined the range of conditions—squid shape and orientation—over which the model is valid, bringing it closer to the day it can be used by resource managers to interpret sonar signals echoing off squid in the wild.

Putting it all together

Lee’s success in deciphering squid echoes means she’s now able to tackle the big question: How do cetaceans find and identify their food?

In January, she went to Honduras to lay the groundwork for experiments at a facility that has captive dolphins in a naturalistic setting. She was delighted to find very low levels of background noise, which she attributed mainly to low numbers of snapping shrimp in the area. She also established lines of communication with the facility’s trainers, who over the next several months will get the dolphins accustomed to wearing a D-tag, a device that temporarily clings to the skin via suction cups and records each animal’s orientation, depth, and direction of movement as well as incoming sonar echoes from potential prey.

Lee hopes to return next fall to observe the dolphins’ behavior in the presence of potential prey and record their echolocation sounds, echoes from the prey, and the dolphins’ movements during each encounter.

Tyack said those experiments, informed and guided by what Lee discovered about squid acoustics in the first phase of her research, should reveal a lot about how dolphins use sound to identify different kinds of prey and how they modify their sonar signals and their swimming behavior as they try to decide whether to eat a particular prey animal.

“My hunch is that it’s almost impossible for this not to yield really interesting information,” he said.

Wu-Jung Lee was supported by the WHOI Academic Programs Office and by a scholarship from the Taiwanese government. The squid she worked with were provided by Roger Hanlon of the Marine Biological Laboratory, Woods Hole, Mass.

Slideshow

WHOI/MIT Joint Program graduate student Wu-Jung Lee adjusts the experimental apparatus that allows her to record sonar echoes from a squid at different orientations. In 2009 Lee won recognition for her acoustical research on squid at two international conferences, the Acoustical Society of America and the Animal Sonar Symposium in Kyoto, Japan. (Photo by Tom Kleindinst, Woods Hole Oceanographic Institution)

Wu-Jung Lee holds a squid (Loligo pealeii) that has been anesthetized in a solution of magnesium chloride. She will place the squid in a frame that rotates through a full circle one degree at a time, allowing her to direct sonar pings at the squid from 360 different angles. Underwater microphones will then detect the echoes that bounce off the squid from each angle. (Photo by Tom Kleindinst, Woods Hole Oceanographic Institution)

Student Wu-Jung Lee analyzed sonar echoes reflected from squid. She is trying to determine how dolphins and toothed whales use sonar to distinguish squid, which they eat, from other animals that they do not eat. In her experiments, she directed sonar pings at a squid suspended from a rotating frame. An underwater microphone received the echoes that bounced off the squid and recorded them on a computer for later analysis. Since dolphins and whales don't always encounter squid from the same direction, Lee wrote a program that enabled the computer to turn the squid through a full circle, one degree at a time. That allowed her to compare echoes from squid that were at different orientations with respect to the sonar source. (Illustration by Jack Cook, Woods Hole Oceanographic Institution)

WHOI/Joint Program student Wu-Jung Lee found that the sonar echo from a squid depends on where the sound waves hit the squid. Echoes of sonar pings that hit a squid broadside (left) are very consistent and differ a lot from echoes of sonar pings that hit at other angles, such as head-on (right). These photos show the squid Sepioteuthis sepioidea, which may or may not be eaten by dolphins or toothed whales. (Photos by Roger Hanlon, Marine Biological Laboratory)

Through her analysis of sonar echoes from squid, WHOI/MIT Joint Program student Wu-Jung Lee found that the shape made by the squid's tentacles greatly influences how sound waves reflect off the squid. A squid with its tentacles folded (left) produces a very different echo than a squid with its tentacles open or splayed (right). These photos show the squids Sepioteuthis sepioidea and Histioteuthis sp., which may or may not be eaten by dolphins or toothed whales. (Photos by (left) Roger Hanlon, Marine Biological Laboratory and (right) Larry Madin, Woods Hole Oceanographic Institution)

See Also

- Listening for Telltale Echoes from Fish: Work by Tim Stanton finds that sound waves resonating off swim bladders offer a new way to count fish. (From Oceanus magazine.)

- New Sonar Method Offers Window into Squid Nurseries: Using sonar to locate and study squid nurseries.

- Run Deep, But Not Silent: An article by Peter Tyack on tracking whales in the ocean's depths.

- Pilot Whales - the 'Cheetahs of the Deep Sea': Researchers reveal first glimpse of whales' high-speed, deep-diving hunts for squid.

- Ocean Acoustics Lab: Learn more about how researchers at Woods Hole Oceanographic Institution are using acoustics to measure ocean properties and to detect living things and geological features in the ocean.